Define Wokeness! Or how you shall know a word by the company it keeps

Visualizing which words often appear near woke/wokeness in news media content illustrates why communication between red and blue America is almost impossible

The English linguist J. R. Firth famously said:

You shall know a word by the company it keeps (Firth, J. R. 1957:11)

as a way of highlighting the context-dependent nature of meaning, a property widely acknowledged in the field of distributional semantics. Recent advances in machine learning, such as word embeddings, in which AI models learn the semantic properties of words from their collocations in large textual corpora, have provided supporting evidence for Firth’s hypothesis.

There has been a bit of chatter recently about how to define woke/wokeness. I decided to look into this question using Firth’s framework. To do so, I constructed word embedding representations from hundreds of thousands of news and opinion articles published by major news outlets over the 2021-2022 period. Without getting into technical details, word embeddings are derived by parsing a large corpus of text and building vector representations of words as a function of the other terms that tend to appear in their vicinity or in similar contexts. They are therefore a useful proxy for estimating which terms often co-occur or appear in similar contexts in a body of text.
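To make the idea concrete, here is a minimal sketch of the distributional principle using plain co-occurrence counts. It is a simplification for illustration, not the embedding method used for this analysis (which is not specified here); the toy corpus, the window size and the function names are all assumptions.

```python
from collections import Counter, defaultdict
import math


def cooccurrence_vectors(sentences, window=2):
    """Map each word to a Counter of the words seen within `window` tokens of it."""
    vectors = defaultdict(Counter)
    for tokens in sentences:
        for i, word in enumerate(tokens):
            lo = max(0, i - window)
            hi = min(len(tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    vectors[word][tokens[j]] += 1
    return vectors


def cosine(a, b):
    """Cosine similarity between two sparse count vectors (Counters)."""
    dot = sum(count * b[term] for term, count in a.items() if term in b)
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical toy corpus, invented purely for illustration.
toy_corpus = [
    "the cat chased the mouse".split(),
    "the dog chased the ball".split(),
    "the cat ate the fish".split(),
    "the dog ate the bone".split(),
    "stocks fell on the news".split(),
]

vectors = cooccurrence_vectors(toy_corpus)
sim_cat_dog = cosine(vectors["cat"], vectors["dog"])
sim_cat_stocks = cosine(vectors["cat"], vectors["stocks"])

# "cat" and "dog" keep the same company in this corpus, so they score
# higher than "cat" and "stocks", which share no context words.
print(sim_cat_dog > sim_cat_stocks)
```

Learned embeddings compress this kind of co-occurrence information into dense vectors (300 dimensions in the models described here), but the intuition is the same: words that keep similar company end up close together.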

Specifically, I wanted to check the contexts in which the words woke and wokeness are used in left-leaning and right-leaning news media. Thus, I built two different models, one with news and opinion articles from news outlets classified as left-leaning by AllSides and another model with news and opinion articles from news outlets classified as right-leaning by the same resource.

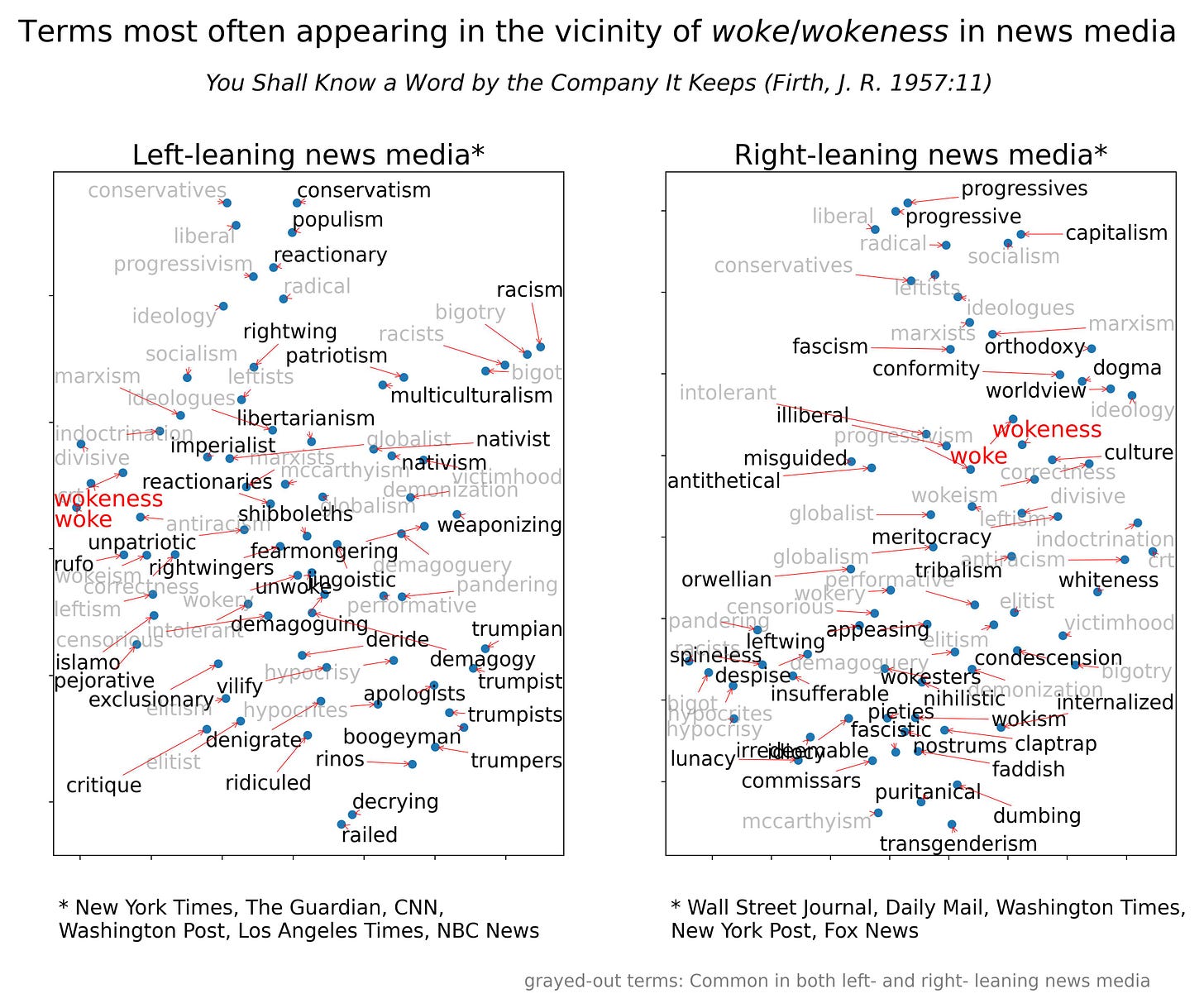

Next, I looked up the words whose vector representations lie closest to the target terms woke and wokeness in each corpus (left- and right-leaning news media content). These are, roughly, the terms that most often appear in the vicinity of woke and wokeness or in similar contexts. In the visualization, I grayed out the terms commonly associated with woke/wokeness in both types of outlets to highlight those that differ (shown in black). To reduce noise, I also Porter-stemmed all the neighboring terms in each group so that terms sharing a stem are flagged as overlap. Finally, I applied the dimensionality reduction technique t-distributed stochastic neighbor embedding (t-SNE) to reduce the vector representations from 300D to 2D while preserving as much of their geometrical structure as possible. The end result is a visualization of the terms that are most often associated with woke/wokeness in left-leaning news media but not in right-leaning news media, and vice versa.
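The neighbor lookup and the overlap flagging can be sketched as follows. This is only an illustration under stated assumptions: the 3D vectors and words are made up (they are not results from the news corpora), `crude_stem` is a simplistic stand-in for the Porter stemmer rather than an implementation of it, and the function names are hypothetical. The t-SNE projection step is not shown.

```python
import math


def cosine(u, v):
    """Cosine similarity between two dense vectors given as lists of floats."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0


def nearest_neighbors(embeddings, target, k=2):
    """Return the k words whose vectors are most similar to the target's vector."""
    scored = [
        (cosine(embeddings[target], vec), word)
        for word, vec in embeddings.items()
        if word != target
    ]
    return [word for _, word in sorted(scored, reverse=True)[:k]]


def crude_stem(word):
    """Strip a few common suffixes. A stand-in for Porter stemming, NOT Porter itself."""
    for suffix in ("ing", "ed", "es", "s"):
        if word.endswith(suffix) and len(word) - len(suffix) >= 4:
            return word[: -len(suffix)]
    return word


def split_shared_and_distinct(neighbors_a, neighbors_b):
    """Flag neighbors whose stems occur in both lists; keep the rest per list."""
    stems_a = {crude_stem(w) for w in neighbors_a}
    stems_b = {crude_stem(w) for w in neighbors_b}
    shared = stems_a & stems_b
    distinct_a = [w for w in neighbors_a if crude_stem(w) not in shared]
    distinct_b = [w for w in neighbors_b if crude_stem(w) not in shared]
    return shared, distinct_a, distinct_b


# Made-up 3D vectors standing in for two separately trained 300D models.
corpus_a = {
    "policy": [1.0, 0.0, 0.0],
    "protests": [0.9, 0.1, 0.0],
    "reforms": [0.8, 0.2, 0.1],
    "weather": [0.0, 1.0, 0.0],
}
corpus_b = {
    "policy": [0.0, 1.0, 0.0],
    "protest": [0.1, 0.9, 0.0],
    "schools": [0.2, 0.8, 0.1],
    "weather": [1.0, 0.0, 0.0],
}

neighbors_a = nearest_neighbors(corpus_a, "policy")
neighbors_b = nearest_neighbors(corpus_b, "policy")
shared, distinct_a, distinct_b = split_shared_and_distinct(neighbors_a, neighbors_b)

print(shared)      # stems present in both neighbor lists (grayed out in the plot)
print(distinct_a)  # neighbors specific to the first corpus
print(distinct_b)  # neighbors specific to the second corpus
```

Because each model is trained separately, their vector spaces are not directly comparable. That is why the comparison is made on the neighbor lists (via shared stems) rather than on the raw vectors.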

The plot above shows that, despite some overlap in the terms that appear in the vicinity of woke and wokeness in left- and right-leaning news media, there are also substantial differences.

Left-leaning news media tend to use the terms woke and wokeness in the vicinity of terms such as bogeyman, trumpists, denigrate, deride, vilify, ridiculed, reactionaries, pejorative, fearmongering, demagoguery, and racism.

Right-leaning news media tend to use the terms woke and wokeness in the vicinity of terms such as lunacy, tribalism, transgenderism, nihilistic, illiberal, orthodoxy, dogma, conformity, idiocy, insufferable, puritanical, claptrap, orwellian, fascism and condescension.

If Firth’s hypothesis is correct, and it probably is, red and blue America have very different ideas in mind when they use the terms woke/wokeness. This renders the two groups almost unable to communicate with each other and therefore condemned to mutual incomprehension (as Jonathan Haidt has argued previously).

As I have explained before, the low cost of customizing the political alignment of AI, and the potential proliferation of AI systems customized to manifest different political biases, could make the problem described above even worse: people might come to trust AIs as the “ultimate sources of truth” while gravitating towards those AI systems that confirm their pre-existing beliefs.

AI, however, also holds the key to a potential solution. Many normative questions cannot be conclusively adjudicated, and a variety of legitimate human opinions exist about them. Politically neutral AI systems that provide balanced sources and a diverse set of legitimate viewpoints on such questions could facilitate communication between different communities by establishing common reference points.
